You are using an out of date browser. It may not display this or other websites correctly.

You should upgrade or use an alternative browser.

You should upgrade or use an alternative browser.

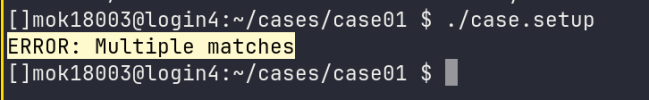

Error running ./case.setup

- Thread starter morpheus

Information to include in help requests

When you are posting to the forums because you want help with an issue, please include as much of the following information as possible. Before submitting a help request, please check to see if your question is already answered: Search the forums for similar issues Check the CIME...

I followed the link for submitting a help request.

```bash
./describe_version > version_info.txt
```

See attached version_info.txt file.

Compiler version on HPC

```bash
module show netcdf-fortran/
```

```
-------------------------------------------------------------------
/cm/shared/modulefiles/netcdf-fortran/4.6.0-ics:

module-whatis {Adds netcdf-fortran/4.6.0-ics to your environment}
module load pre-module
conflict netcdf-fortran
module load hdf5/1.13.2-ics
module load netcdf/4.9.0-ics
setenv MOD_APP netcdf-fortran
setenv MOD_VER 4.6.0-ics
prepend-path PATH /gpfs/sharedfs1/admin/hpc2.0/apps/netcdf-fortran/4.6.0-ics/bin
prepend-path LIBRARY_PATH /gpfs/sharedfs1/admin/hpc2.0/apps/netcdf-fortran/4.6.0-ics/lib
prepend-path LD_LIBRARY_PATH /gpfs/sharedfs1/admin/hpc2.0/apps/netcdf-fortran/4.6.0-ics/lib
prepend-path CPATH /gpfs/sharedfs1/admin/hpc2.0/apps/netcdf-fortran/4.6.0-ics/include
prepend-path INCLUDE /gpfs/sharedfs1/admin/hpc2.0/apps/netcdf-fortran/4.6.0-ics/include
prepend-path MANPATH /gpfs/sharedfs1/admin/hpc2.0/apps/netcdf-fortran/4.6.0-ics/share/man
prepend-path PKG_CONFIG_PATH /gpfs/sharedfs1/admin/hpc2.0/apps/netcdf-fortran/4.6.0-ics/lib/pkgconfig
module load post-module
```

CaseStatus file

See attached CaseStatus.txt file.

Case creation

See attached README.case.txt file.

This is the information I was able to gather. I get the error described below whenever I create a new case and try to run ./case.setup.

I do not know what caused this issue, but it started happening after I deleted a test case directory.

Any help would be greatly appreciated.

Attachments

When you ran case.setup with --debug, did it exit normally or did you have to press Ctrl-C to exit?

I still don't see what the issue is.

I think the error occurs because some XML element is expected to have 0 or 1 settings and more than one is being found. However, I can't tell from what you've sent me which element that may be. When you run with --debug you should get a traceback.
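If the cause is indeed an element that appears more often than expected, a small script can help narrow it down. The following is a minimal sketch, not part of CIME: it assumes the duplication shows up as sibling elements sharing the same tag and the same attributes, and the helper name `find_repeated_elements` and the sample XML are hypothetical.

```python
import xml.etree.ElementTree as ET
from collections import Counter


def find_repeated_elements(xml_text):
    """Return {(tag, attributes): count} for sibling elements that share
    the same tag and the same attributes under one parent."""
    root = ET.fromstring(xml_text)
    repeated = {}
    for parent in root.iter():
        # Signature of each direct child: tag plus its sorted attributes.
        counts = Counter(
            (child.tag, tuple(sorted(child.attrib.items()))) for child in parent
        )
        for key, n in counts.items():
            if n > 1:
                repeated[key] = repeated.get(key, 0) + n
    return repeated


# Hypothetical sample, not the attached file.
SAMPLE = """<file>
  <mpirun mpilib="default"><executable>srun</executable></mpirun>
  <mpirun mpilib="default"><executable>mpirun</executable></mpirun>
  <modules mpilib="default"><command name="load">openmpi</command></modules>
</file>"""

if __name__ == "__main__":
    for (tag, attrs), n in find_repeated_elements(SAMPLE).items():
        print(f"<{tag}> with attributes {dict(attrs)} appears {n} times")
```

To check a real case, read the text of env_mach_specific.xml from the case directory and pass it to the function in place of `SAMPLE`.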

I exited with Ctrl+C.

Anyway, if I include the following snippet in my env_mach_specific.xml:

    <mpirun mpilib="default">
      <executable>srun</executable>
      <arguments>
        <arg name="pmi">--mpi=pmix</arg>
        <arg name="num_tasks"> -n {{ total_tasks }}</arg>
      </arguments>
    </mpirun>

I get the following error:

    ERROR: Multiple matches

But if I remove the mpirun section, I get the following error:

    ERROR: Could not find a matching MPI for attributes: {'compiler': 'gnu', 'mpilib': 'openmpi', 'threaded': False}

I have attached the full env_mach_specific.xml file below.
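To illustrate why removing the mpirun section can produce the second error, here is a simplified model of attribute matching. It is a sketch under stated assumptions, not CIME's implementation: it assumes an entry is compatible when every attribute it sets equals the case's value, and that mpilib="default" acts as a fallback used only when no specific entry matches. The function name `select_mpirun` and the entry format are hypothetical.

```python
def select_mpirun(entries, case_attribs):
    """Pick an executable from entries, a list of (attribs, executable).

    Assumed rule: an entry is compatible if each attribute it sets equals
    the case value; mpilib="default" is treated as a fallback.
    """
    specific, fallback = [], []
    for attribs, executable in entries:
        is_default = attribs.get("mpilib") == "default"
        compatible = all(
            case_attribs.get(key) == value
            for key, value in attribs.items()
            if not (key == "mpilib" and is_default)
        )
        if compatible:
            (fallback if is_default else specific).append(executable)
    candidates = specific or fallback
    if not candidates:
        # With no mpirun entry at all, nothing can match.
        raise LookupError(
            f"Could not find a matching MPI for attributes: {case_attribs}"
        )
    return candidates[0]


case = {"compiler": "gnu", "mpilib": "openmpi", "threaded": False}

if __name__ == "__main__":
    print(select_mpirun([({"mpilib": "default"}, "srun")], case))
    try:
        select_mpirun([], case)
    except LookupError as err:
        print("ERROR:", err)
```

Under this model, a single mpilib="default" entry is enough to satisfy an openmpi case, while an empty list of entries fails regardless of the case attributes.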

Attachments

You should not edit env_mach_specific.xml directly. It's better to edit config_machines.xml and then rerun ./case.setup to regenerate the env_mach_specific.xml file. Have you tried replacing mpilib="default" with mpilib="openmpi"?

Also, when you exited with Ctrl+C you should have seen a traceback in your terminal. That traceback would be helpful to see.

Changing "default" to "openmpi" in the following section of the XML did not make a difference:

    <modules mpilib="default">
      <command name="load">openmpi</command>
    </modules>

The following is the traceback I got after running ./case.setup --debug:

    []mok18003@login6:~/cases/case01 $ ./case.setup --debug
    RUN: /usr/bin/xmllint --noout --schema /home/mok18003/my_cesm_sandbox/cime/config/xml_schemas/env_entry_id.xsd /home/mok18003/cases/case01/env_case.xml
    errput: /home/mok18003/cases/case01/env_case.xml validates
    RUN: /usr/bin/xmllint --noout --schema /home/mok18003/my_cesm_sandbox/cime/config/xml_schemas/env_entry_id.xsd /home/mok18003/cases/case01/env_run.xml
    errput: /home/mok18003/cases/case01/env_run.xml validates
    RUN: /usr/bin/xmllint --noout --schema /home/mok18003/my_cesm_sandbox/cime/config/xml_schemas/env_entry_id.xsd /home/mok18003/cases/case01/env_build.xml
    errput: /home/mok18003/cases/case01/env_build.xml validates
    RUN: /usr/bin/xmllint --noout --schema /home/mok18003/my_cesm_sandbox/cime/config/xml_schemas/env_mach_pes.xsd /home/mok18003/cases/case01/env_mach_pes.xml
    errput: /home/mok18003/cases/case01/env_mach_pes.xml validates
    RUN: /usr/bin/xmllint --noout --schema /home/mok18003/my_cesm_sandbox/cime/config/xml_schemas/env_batch.xsd /home/mok18003/cases/case01/env_batch.xml
    errput: /home/mok18003/cases/case01/env_batch.xml validates
    RUN: /usr/bin/xmllint --noout --schema /home/mok18003/my_cesm_sandbox/cime/config/xml_schemas/env_mach_specific.xsd /home/mok18003/cases/case01/env_mach_specific.xml
    errput: /home/mok18003/cases/case01/env_mach_specific.xml validates
    RUN: /usr/bin/xmllint --noout --schema /home/mok18003/my_cesm_sandbox/cime/config/xml_schemas/env_archive.xsd /home/mok18003/cases/case01/env_archive.xml
    errput: /home/mok18003/cases/case01/env_archive.xml validates
    > /home/mok18003/my_cesm_sandbox/cime/scripts/lib/CIME/utils.py(126)expect()
    -> try:
    (Pdb)
